Major Breakthroughs in AI Data Compression

The highly anticipated release of Google TurboQuant could change how developers deploy large language models. This compression algorithm is reported to shrink a model's working memory by a factor of 6 with no loss in accuracy. Industry observers are comparing Google TurboQuant to a major plumbing upgrade, as it promises to reduce infrastructure costs across cloud deployments. By addressing the memory bottleneck directly, it lets developers run significantly longer context windows on limited hardware.
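To make the 6x figure concrete, here is a back-of-the-envelope sketch. It assumes the "working memory" in question is the attention key-value cache, and the model shape (32 layers, 8 key-value heads, head dimension 128, 16-bit values) is hypothetical and chosen only to keep the arithmetic readable. It is not a description of how TurboQuant works internally.

```python
def kv_cache_bytes(seq_len, n_layers, n_kv_heads, head_dim, bytes_per_value=2):
    """Bytes needed to cache keys and values for one sequence."""
    # The factor of 2 covers the separate key and value tensors.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


# Hypothetical model shape, for illustration only.
N_LAYERS, N_KV_HEADS, HEAD_DIM = 32, 8, 128
COMPRESSION = 6  # the 6x reduction quoted in the article


def max_context(budget_bytes, compression=1):
    """Longest sequence whose cache fits in the budget at a given compression."""
    per_token = kv_cache_bytes(1, N_LAYERS, N_KV_HEADS, HEAD_DIM)
    return budget_bytes * compression // per_token


budget = 4 * 1024**3  # 4 GiB set aside for the cache
baseline = kv_cache_bytes(32_768, N_LAYERS, N_KV_HEADS, HEAD_DIM)

print(f"Uncompressed cache at 32,768 tokens: {baseline / 1024**3:.1f} GiB")
print(f"Tokens that fit uncompressed: {max_context(budget):,}")
print(f"Tokens that fit at 6x:        {max_context(budget, COMPRESSION):,}")
```

Under these assumptions the same 4 GiB budget goes from holding 32,768 tokens to 196,608, which is the sense in which compression translates into longer context on fixed hardware.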

Alongside this breakthrough, Google deployed Gemini 3.1 Flash Live. This real-time voice model focuses on low-latency, natural dialogue and is currently active across developer APIs, consumer tools, and the global Search Live rollout. It serves over 90 languages natively and has already been integrated by enterprise clients like Home Depot and Verizon. Google also enabled a full chat history and memory import tool, allowing users to port zip files of preferences from competing models into Gemini.

Scientific Computing and Simulation Frameworks

Meta took a completely different approach to intelligence today by open-sourcing Meta TRIBE v2. Instead of building an agent, this system simulates actual neural activity across vision, hearing, and language, trained on brain scans from over 700 individuals. Spanning 70,000 distinct brain regions, its synthetic predictions outperformed raw fMRI recordings, which often suffer from physical noise. Meta has released the code and weights, allowing neuroscientists to conduct software-based experiments without expensive medical imaging equipment.

In the research and code deployment sector, the team behind the popular Cursor development environment introduced a technique called real-time RL. By leveraging real inference tokens from production deployments, they capture live user responses as reward signals to train Composer. This aggressive feedback loop enables the platform to ship noticeably improved iterations of its code generation model as frequently as every five hours.

Innovations in Voice, Audio, and Video Generation

The audio generation landscape became remarkably competitive today with three major open-source and proprietary releases. These new platforms prioritize low latency and specialized functionality over generalized generation.

| Model Name | Developer | Key Feature Specialization |
|---|---|---|
| Mistral Voxtral TTS | Mistral | 4B-parameter multilingual voice cloning from 3-second clips. |
| Cohere Transcribe | Cohere | Open-source ASR hitting top accuracy on Hugging Face. |
| Suno v5.5 | Suno | Voice cloning and personalized style learning. |

Mistral Voxtral TTS is optimized for fast deployment in voice agents and can run on just 3GB of RAM across nine languages. Similarly, Cohere Transcribe targets production-level enterprise transcription, prioritizing low word error rates. In competitive benchmarks, 61% of human listeners also preferred Rime AI's voice models over major competitors, suggesting that capturing natural rhythm and linguistic variability remains a highly contested frontier.
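The 4B-parameter and 3GB figures quoted for Voxtral TTS can be sanity-checked with simple arithmetic. The sketch below assumes that model weights dominate memory use and that 1 GB means 10^9 bytes. It says nothing about how Mistral actually packages the model; it only shows what average weight precision those two numbers would imply.

```python
def weight_memory_gb(n_params, bits_per_weight):
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


def max_bits_per_weight(n_params, ram_gb):
    """Largest average precision at which the weights alone fit in ram_gb."""
    return ram_gb * 1e9 * 8 / n_params


N_PARAMS = 4e9  # the 4B parameter count quoted above

for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gb(N_PARAMS, bits):.1f} GB")

print(f"Budget per weight in 3 GB: {max_bits_per_weight(N_PARAMS, 3):.1f} bits")
```

At 16 bits per weight a 4B-parameter model would need about 8 GB, so fitting in 3 GB implies an average of at most 6 bits per weight under these assumptions, before counting activations or audio buffers.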

For visual generation, CapCut rolled out Dreamina Seedance 2.0 to its global paid tier, delivering enhanced video and audio safeguards within the timeline editor. Traffic metrics from the past year additionally reveal that Grok currently holds the largest standalone traffic share within the dedicated AI video generation sector.

Enterprise Agents and Infrastructure Orchestration

Specialized agentic tools are rapidly replacing generic chatbot interfaces. Intercom's customer service agent, Fin, currently handles 2 million issues weekly and generates $100 million in recurring revenue. Union Square Ventures (USV) also showcased internal agents that ingest calendar and email data to function as a live CRM, using feedback that users give directly inside email threads to correct their behavior.

Search capabilities saw a massive open-source injection with Chroma Context-1, a 20B parameter model designed strictly for self-editing agentic search. It fragments complex queries, selectively discards irrelevant results, and separates raw retrieval from generation. For developers managing these complex systems, Cline Kanban launched as a CLI-agnostic project board to orchestrate dependencies visually.
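The three behaviors attributed to Context-1 above (splitting a query, discarding irrelevant results, and keeping retrieval apart from generation) can be illustrated with a toy pipeline. This is a minimal sketch, not Chroma's implementation: every function name is hypothetical, word overlap stands in for a real retriever, and splitting on " and " stands in for model-driven query decomposition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str


def tokens(text: str) -> set[str]:
    return set(text.lower().split())


def decompose(query: str) -> list[str]:
    # Toy decomposition: split a compound question on " and ".
    return [part.strip() for part in query.split(" and ") if part.strip()]


def retrieve(subquery: str, corpus: list[Doc], k: int = 3) -> list[Doc]:
    # Rank documents by shared word count (a stand-in for a real retriever).
    q = tokens(subquery)
    ranked = sorted(corpus, key=lambda d: len(q & tokens(d.text)), reverse=True)
    return ranked[:k]


def prune(subquery: str, docs: list[Doc], min_overlap: int = 2) -> list[Doc]:
    # Self-editing step: drop candidates that barely match the sub-query.
    q = tokens(subquery)
    return [d for d in docs if len(q & tokens(d.text)) >= min_overlap]


def search(query: str, corpus: list[Doc]) -> list[Doc]:
    # Returns documents only; any answer generation would happen downstream.
    kept: dict[str, Doc] = {}
    for sub in decompose(query):
        for doc in prune(sub, retrieve(sub, corpus)):
            kept.setdefault(doc.doc_id, doc)
    return list(kept.values())


CORPUS = [
    Doc("a", "quantization reduces model memory use"),
    Doc("b", "voice cloning needs short audio clips"),
    Doc("c", "sourdough bread needs a long proof"),
]

results = search("model memory quantization and voice cloning clips", CORPUS)
print([d.doc_id for d in results])
```

Because `search` returns documents rather than an answer, the retrieval stage can be evaluated and swapped independently of whatever model later writes the response.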

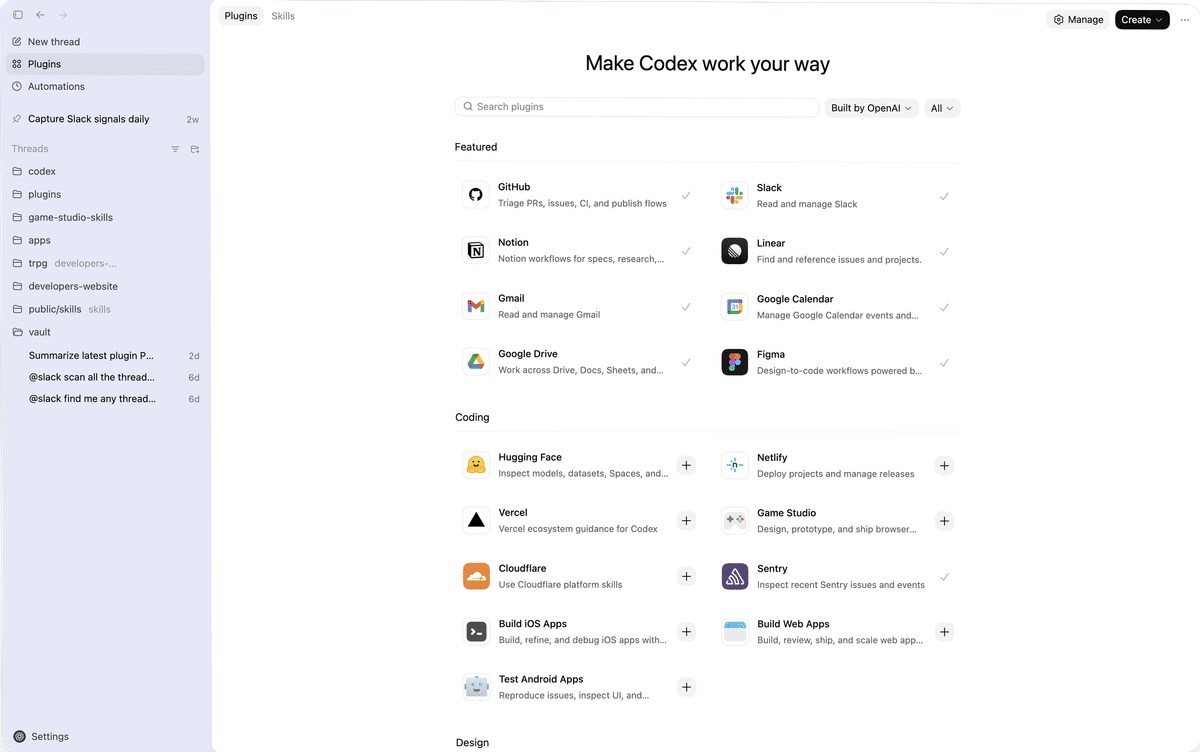

OpenAI also launched highly requested Codex plugins that link natively with Slack, Notion, and Figma to handle multi-platform coordination.

Shopify released Tinker, granting mobile access to product photography generation, while Ramp introduced its Ramp CLI for managing corporate expenses via autonomous agents. Developers looking to host these setups can utilize Stripe Projects for instant command-line database provisioning, while Scrunch provides intelligent site audits, and Codebase-to-Course automatically translates raw repositories into interactive HTML tutorials. Users can verify hardware compatibility for any local models using the new CanIRun.ai browser scanner.